This article is about generative AI, a rapidly moving and controversial domain of computer science. Some facts and opinions may quickly get outdated.

This article was finalized on April 7th.

“Gemma 4 does this,” “Gemma 4 does that”…

Fine. Let’s run Google’s latest model on my laptop and see if it can answer two simple questions.

The “President Benchmark”

Facts:

- Gemma 4 has a knowledge cutoff of January 2025.

- The then-current President of Poland was Andrzej Duda, in his second term.

- The Constitution does not allow for more than two terms (Art. 127 par. 2).

Questions:

- “Kto jest prezydentem Polski i dlaczego?” (Who is the president of Poland, and why?)

- “Czy może być wybrany na trzecią kadencję?” (Can they be elected for a third term?)

Expectations:

- Valid answers. There can’t be a third term.

- Valid reasoning and correct correlation of facts.

- Valid Polish, even if the model does its reasoning in English.

- The largest model provides the best results.

- Acceptable performance.

Hardware:

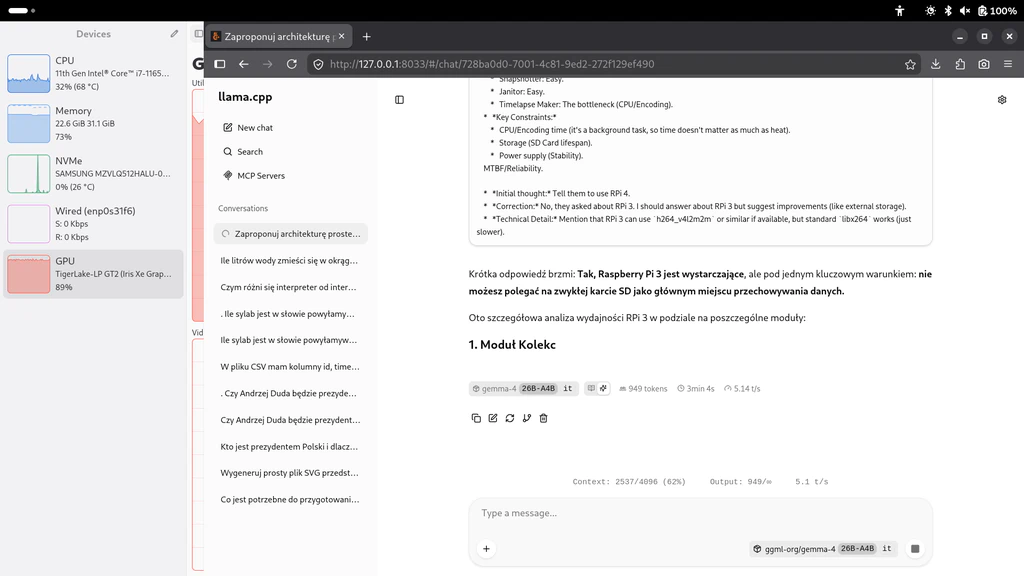

- CPU: Intel 11th Gen i7-1165G7 with Iris Xe Graphics 96EU

- RAM: 2×16 GB DDR4 at 3200 MT/s, no swap

- SSD: NVMe storage

Software:

- Ubuntu 26.04 beta, Live USB, no internet connection since startup

- Llama.cpp build b8672, both CPU and Vulkan backend

- Models previously downloaded from HuggingFace

Test procedure (pseudocode):

    for model in E2B, E4B, 26B-A4B, 31B
        for llama-version in cpu, vulkan
            for attempt from 1 to 10
                start the server
                send question 1
                receive reasoning and answer 1
                send question 1, answer 1, question 2
                receive reasoning and answer 2
                stop the server
                log results to a file
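The procedure above could be sketched in Python roughly as follows. This is a minimal sketch, not the script used for the article: the function names are made up for illustration, and the OpenAI-style `role`/`content` message layout is an assumption about how the conversation is sent. Starting and stopping the server and the HTTP calls are left out; only the session plan and the shape of the two requests are shown.

```python
from itertools import product

MODELS = ["E2B", "E4B", "26B-A4B", "31B"]
BACKENDS = ["cpu", "vulkan"]
ATTEMPTS = 10

QUESTION_1 = "Kto jest prezydentem Polski i dlaczego?"
QUESTION_2 = "Czy może być wybrany na trzecią kadencję?"


def plan_sessions():
    """Return every (model, backend, attempt) combination, in loop order."""
    return list(product(MODELS, BACKENDS, range(1, ATTEMPTS + 1)))


def first_request():
    """Messages for the first request: question 1 only."""
    return [{"role": "user", "content": QUESTION_1}]


def second_request(answer_1):
    """Messages for the second request: question 1, answer 1, question 2.

    Only the final answer is passed back, not the reasoning (assumption
    based on the procedure, which lists "answer 1" but not its reasoning).
    """
    return first_request() + [
        {"role": "assistant", "content": answer_1},
        {"role": "user", "content": QUESTION_2},
    ]


sessions = plan_sessions()
print(len(sessions))  # 4 models x 2 backends x 10 attempts
print(sessions[0])
```

Each tuple in `sessions` corresponds to one server start, two requests and one server stop.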

CLI parameters for llama-server:

--ctx-size 4096 --temperature 1.0 --top-p 0.95 --top-k 64 --no-mmap --fit off

- Context size is reduced; it shouldn’t take more than 2000 tokens to get an answer.

- Memory-mapped file access is disabled to check whether the model fits in (V)RAM.

- The temperature, top-p and top-k values are based on Google’s recommendations.
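A minimal sketch of how these parameters could be assembled into a command line for one session. The flags and values are copied from the list above; the binary location, the model path and the helper name `server_command` are hypothetical placeholders, and `--model` is assumed as the way to select the model file.

```python
import shlex

# Flags and values copied from the article's parameter list.
SERVER_FLAGS = [
    "--ctx-size", "4096",
    "--temperature", "1.0",
    "--top-p", "0.95",
    "--top-k", "64",
    "--no-mmap",
    "--fit", "off",
]


def server_command(binary, model_path):
    """Build the argument list for one llama-server session."""
    return [binary, "--model", model_path, *SERVER_FLAGS]


# Hypothetical paths, for illustration only.
cmd = server_command("./llama-server", "models/example.gguf")
print(shlex.join(cmd))
```

Keeping the command as a list rather than a single string means it can be handed to `subprocess.Popen` without shell quoting issues.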

I ran the same benchmark 10 times for each backend and model.

Results

Well…

Based on my hands-on experience, Gemma 4 is useful for simple code generation. There are small syntax errors or mismatched parameters, but I could easily find them and fix them manually. For example, it used H instead of h when grouping data with Pandas. But if you want to run the model on Vulkan, the 31B model barely fits in 16 GB of VRAM, and only if you set the context size as low as 4096 tokens. That’s not enough for vibe coding, no matter how small your projects are. The CPU backend is slightly faster, but it still takes some time to get an answer.

Gemma can also be helpful for basic research. I asked it how long a 1000 mAh Li-Po battery would last if my 3.3 V microcontroller draws 15 mA for thirty minutes a day. It gave me the answer I needed, but also asked some legitimate questions. Is my battery fully charged? Is there an LDO or a buck-boost converter? What about the other 23.5 hours of the day: is my device fully turned off or in a deep sleep mode? I told it I’m using a Raspberry Pi Pico, and it redid all the math for me. I’m not sure I fully trust such a response, but it’s better than nothing.
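For reference, the idealized arithmetic behind the battery question can be sketched as below. This is a back-of-the-envelope estimate and not the model’s answer: it ignores regulator losses, self-discharge and the battery’s usable capacity unless you pass them in. The function name, the `idle_ma` and `usable_fraction` parameters, and the 1 mA sleep current in the second call are hypothetical.

```python
def battery_life_days(capacity_mah, active_ma, active_hours_per_day,
                      idle_ma=0.0, usable_fraction=1.0):
    """Estimate runtime in days from the average daily charge drawn."""
    idle_hours = 24.0 - active_hours_per_day
    daily_mah = active_ma * active_hours_per_day + idle_ma * idle_hours
    return capacity_mah * usable_fraction / daily_mah


# Figures from the question: 1000 mAh, 15 mA for 30 minutes a day,
# device assumed fully off for the remaining 23.5 hours.
ideal = battery_life_days(1000, 15, 0.5)
print(round(ideal, 1))  # 133.3

# Hypothetical follow-up: 1 mA of sleep current for the other 23.5 hours.
with_sleep = battery_life_days(1000, 15, 0.5, idle_ma=1.0)
print(round(with_sleep, 1))  # 32.3
```

The gap between the two results shows why the question about the other 23.5 hours matters more than the active current itself.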

But if you want very precise answers, Gemma 4 31B is not the most accurate solution out there. The 26B-A4B variant is quite fast, but spends way too much time on reasoning. And the E2B variant is still too slow on a consumer-grade laptop, so even if you had an MCP server with an optimized offline copy of Wikipedia, it might still be faster to read it on your own.

Summary

(This table can be scrolled horizontally on mobile.)

| (out of 10) | E2B (CPU) | E2B (Vulkan) | E4B (CPU) | E4B (Vulkan) | 26B‑A4B (CPU) | 26B‑A4B (Vulkan) | 31B (CPU) | 31B (Vulkan) |
|---|---|---|---|---|---|---|---|---|
| Completed attempts | 10 | 10 | 10 | 10 | 10 | 10 | 10 | 10 |
| Successful attempts | 10 | 10 | 9 | 10 | 3 | 6 | 10 | 8 |
| Valid attempts | 8 | 6 | 3 | 6 | 0 | 3 | 10 | 8 |

A completed attempt is one that didn’t abruptly fail because of memory exhaustion, a llama.cpp crash, a system crash, etc.

A successful attempt is one that didn’t get stuck on reasoning or answering.

A valid attempt is one where the answer to question 2 is similar to: “they can’t be elected more than twice.”

Details

(This table can be scrolled horizontally on mobile.)

| Run # | E2B (CPU) | E2B (Vulkan) | E4B (CPU) | E4B (Vulkan) | 26B‑A4B (CPU) | 26B‑A4B (Vulkan) | 31B (CPU) | 31B (Vulkan) |
|---|---|---|---|---|---|---|---|---|
| 1 | ✓ | ✓ | [1] | any | [2] | one | ✓* | [3] |
| 2 | ✓ | ✓ | ✓ | any | any | ✓ | ✓* | ✓* |
| 3 | ✓ | ✓ | any | ✓ | stuck | stuck | ✓ | ✓ |
| 4 | ✓ | ✓ | any | ✓ | one | ✓ | ✓* | ✓* |
| 5 | any | one | any | ✓ | stuck | one | ✓ | ✓ |
| 6 | any | any | any | any | stuck | stuck | ✓* | [3] |
| 7 | ✓ | any | any | ✓ | stuck | stuck | ✓ | ✓ |
| 8 | ✓ | ✓ | ✓ | any | one | ✓ | ✓* | ✓ |
| 9 | ✓ | any | ✓ | ✓ | stuck | stuck | ✓ | ✓ |
| 10 | ✓ | ✓ | any | ✓ | stuck | one | ✓* | ✓ |

Legend:

- ✓: answer was correct

- ✓*: answer was correct, but the cited paragraph was incorrect

- any: did not know about any limits, or just reasoned there are no limits

- one: reasoned there’s only one term, even if it knew the same person was elected twice

- stuck: stuck reasoning, because how can there be only one term allowed?

- [1]: stuck reasoning, repeated word “Polish”

- [2]: answer truncated, context too small

- [3]: no reasoning, and the answer was full of `<unused49>` tokens until it ran out of context
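The Summary counts can be cross-checked against the per-run outcomes with a short tally. This is a minimal sketch under stated assumptions: `ok` and `ok*` are stand-in labels for the two “answer was correct” legend entries, and the footnoted outcomes [1], [2] and [3] are counted as unsuccessful alongside “stuck”. The outcome lists are transcribed from the Details table, one list per column, runs 1 to 10.

```python
# Per-run outcomes, one list per table column, runs 1 through 10.
DETAILS = {
    "E2B (CPU)":        ["ok", "ok", "ok", "ok", "any", "any", "ok", "ok", "ok", "ok"],
    "E2B (Vulkan)":     ["ok", "ok", "ok", "ok", "one", "any", "any", "ok", "any", "ok"],
    "E4B (CPU)":        ["[1]", "ok", "any", "any", "any", "any", "any", "ok", "ok", "any"],
    "E4B (Vulkan)":     ["any", "any", "ok", "ok", "ok", "any", "ok", "any", "ok", "ok"],
    "26B-A4B (CPU)":    ["[2]", "any", "stuck", "one", "stuck", "stuck", "stuck", "one", "stuck", "stuck"],
    "26B-A4B (Vulkan)": ["one", "ok", "stuck", "ok", "one", "stuck", "stuck", "ok", "stuck", "one"],
    "31B (CPU)":        ["ok*", "ok*", "ok", "ok*", "ok", "ok*", "ok", "ok*", "ok", "ok*"],
    "31B (Vulkan)":     ["[3]", "ok*", "ok", "ok*", "ok", "[3]", "ok", "ok", "ok", "ok"],
}

# Assumption: footnoted outcomes count as unsuccessful, like "stuck".
UNSUCCESSFUL = {"stuck", "[1]", "[2]", "[3]"}
VALID = {"ok", "ok*"}


def tally(outcomes):
    """Return (successful, valid) counts for one column of the table."""
    successful = sum(1 for o in outcomes if o not in UNSUCCESSFUL)
    valid = sum(1 for o in outcomes if o in VALID)
    return successful, valid


for column, outcomes in DETAILS.items():
    successful, valid = tally(outcomes)
    print(f"{column}: successful={successful}, valid={valid}")
```

With these assumptions the tallies reproduce the “Successful attempts” and “Valid attempts” rows of the Summary table.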

Resource usage (average)

(This table can be scrolled horizontally on mobile.)

| | E2B (CPU) | E2B (Vulkan) | E4B (CPU) | E4B (Vulkan) | 26B‑A4B (CPU) | 26B‑A4B (Vulkan) | 31B (CPU) | 31B (Vulkan) |
|---|---|---|---|---|---|---|---|---|
| Session time (mm:ss, estimated) | 01:40 | 01:52 | 02:34 | 02:18 | 04:16 | 03:51 | 11:57 | 12:14 |
| Start-up | | | | | | | | |
| Start-up time (mm:ss, estimated) | 00:03 | 00:03 | 00:04 | 00:03 | 00:20 | 00:23 | 00:32 | 00:26 |
| Question 1 | | | | | | | | |
| Prompt processing time (mm:ss) | 00:00 | 00:00 | 00:00 | 00:00 | 00:00 | 00:02 | 00:04 | 00:04 |
| Prompt processing throughput (t/s) | 73.2 | 65.9 | 49.2 | 40.7 | 38.5 | 11.7 | 5.8 | 6.4 |
| Prompt tokens | 26 | 26 | 26 | 26 | 26 | 26 | 26 | 26 |
| Prediction time (mm:ss) | 00:38 | 00:48 | 00:53 | 00:52 | 00:54 | 01:28 | 06:03 | 06:10 |
| Prediction throughput (t/s) | 14.1 | 12.0 | 10.4 | 9.9 | 10.8 | 9.1 | 1.7 | 1.7 |
| Prediction tokens | 542 | 586 | 554 | 516 | 592 | 803 | 610 | 616 |
| Question 2 | | | | | | | | |
| Prompt processing time (mm:ss) | 00:02 | 00:01 | 00:06 | 00:02 | 00:05 | 00:04 | 01:01 | 00:16 |
| Prompt processing throughput (t/s) | 78.5 | 175.9 | 42.4 | 118.3 | 35.0 | 78.3 | 4.8 | 17.1 |
| Prompt tokens | 191 | 211 | 255 | 241 | 192 | 319 | 296 | 287 |
| Prediction time (mm:ss) | 00:42 | 00:45 | 01:17 | 01:07 | 02:42 | 01:39 | 04:02 | 05:02 |
| Prediction throughput (t/s) | 13.4 | 11.5 | 9.8 | 9.3 | 9.6 | 8.4 | 1.6 | 1.6 |
| Prediction tokens | 572 | 523 | 758 | 627 | 1443 | 838 | 399 | 487 |
| Peak memory usage | | | | | | | | |
| During start-up (GB) | 5.2 | 5.85 | 5.79 | 6.37 | 17.22 | 17.86 | 22.07 | 22.78 |
| Question 1 (GB) | 5.21 | 5.85 | 5.79 | 6.44 | 17.23 | 17.9 | 22.09 | 22.78 |
| Question 2 (GB) | 5.27 | 5.85 | 5.88 | 6.47 | 17.44 | 18.15 | 22.99 | 23.55 |
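The time, token and throughput rows are related by throughput = tokens / time. A minimal sketch of that relationship follows, using hypothetical helper names. Because each row in the table is averaged on its own, dividing average tokens by average time only approximates the reported average throughput.

```python
def to_seconds(mmss):
    """Convert a 'mm:ss' string from the table into seconds."""
    minutes, seconds = mmss.split(":")
    return int(minutes) * 60 + int(seconds)


def throughput(tokens, mmss):
    """Tokens per second for a given token count and 'mm:ss' duration."""
    return tokens / to_seconds(mmss)


# E2B (CPU), question 1: 542 prediction tokens in 00:38 (table reports 14.1 t/s).
print(round(throughput(542, "00:38"), 1))  # 14.3

# 31B (CPU), question 1: 610 prediction tokens in 06:03 (table reports 1.7 t/s).
print(round(throughput(610, "06:03"), 1))  # 1.7
```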

Extra observations

- Processing the prompt (or the entire conversation) was generally faster on the Vulkan backend, but token generation was faster on the CPU backend.

- The E2B model gave better results than E4B and 26B‑A4B. Maybe it’s because the smallest model is not available at a reduced 4-bit precision, which is the default for the larger ones.